How It Works

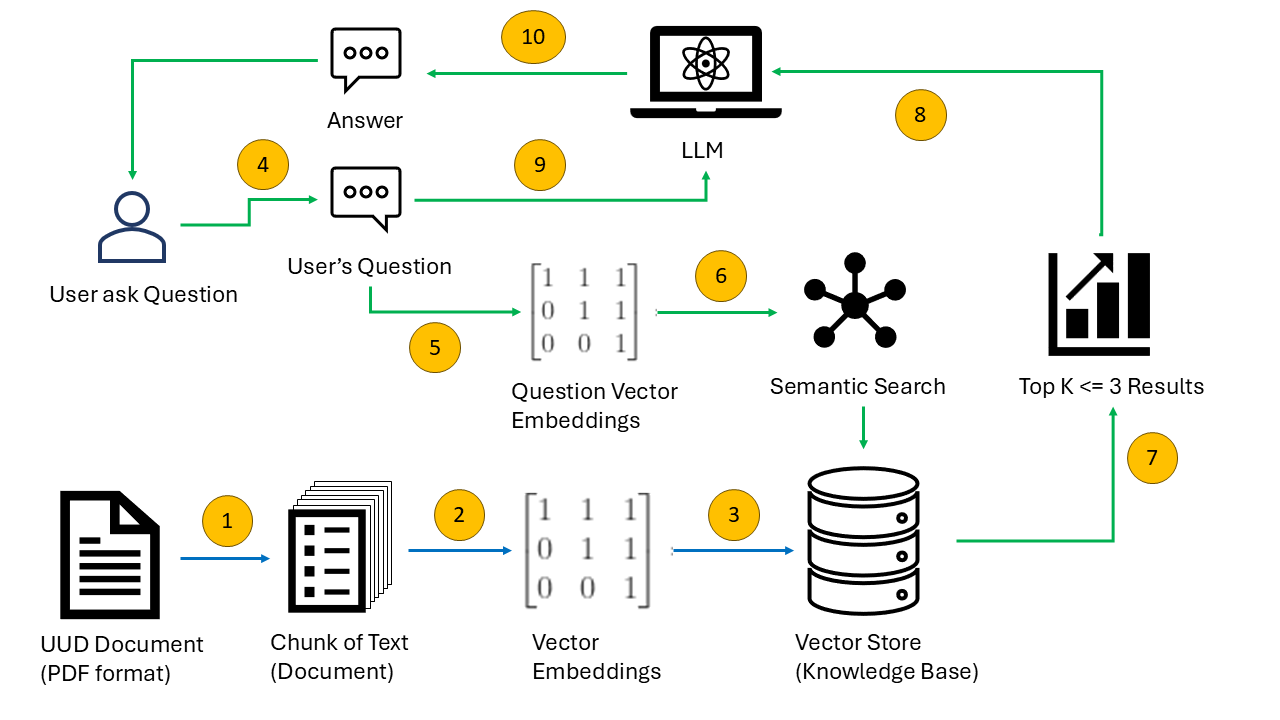

The pipeline operates in two distinct phases: an Indexing Phase that runs once to build the knowledge base, and a Query Phase that runs on every user interaction. The steps listed below map to the numbered steps in the process flow diagram above.

PyPDFLoader reads `uud45_asli.pdf` page by page, extracting raw text with page metadata. The custom `parse_uud()` function splits the text into semantic chunks aligned with the legal structure: BAB (chapter) → Pasal (article) → Ayat (clause). Each chunk is then encoded by all-MiniLM-L6-v2 into an embedding vector.

Indexing Phase:

- Load PDF with PyPDFLoader

- Concatenate pages with page numbers

- Run `parse_uud()` to generate Document chunks

- Embed chunks using sentence-transformers on CUDA

- Persist vectors to `./db` via ChromaDB
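The chunking step can be illustrated with a small, standard-library-only sketch. This is not the project's actual `parse_uud()`, whose source is not shown here. The function name `parse_uud_sketch`, the `Chunk` dataclass (standing in for the Document objects), the regular expressions, and the metadata keys `bab`, `pasal`, and `ayat` are all assumptions made for illustration. It assumes headings appear on their own lines as `BAB I`, `Pasal 1`, and `(1) ...`.

```python
import re
from dataclasses import dataclass, field

# Assumed heading patterns; the real PDF text may need different ones.
BAB_RE = re.compile(r"^BAB\s+([IVXLC]+)\b")        # e.g. "BAB I"
PASAL_RE = re.compile(r"^Pasal\s+(\d+[A-Z]?)\b")   # e.g. "Pasal 1", "Pasal 6A"
AYAT_RE = re.compile(r"^\((\d+)\)\s*(.*)")         # e.g. "(1) text..."


@dataclass
class Chunk:
    """Hypothetical stand-in for a Document: text plus metadata."""
    text: str
    metadata: dict = field(default_factory=dict)


def parse_uud_sketch(text: str) -> list[Chunk]:
    """Emit one chunk per Ayat, or one per Pasal when it has no Ayat."""
    chunks: list[Chunk] = []
    bab = pasal = None
    current = None  # chunk currently being extended by continuation lines

    for raw in text.splitlines():
        line = raw.strip()
        if not line:
            continue
        if m := BAB_RE.match(line):
            # New chapter: reset the article so chapter titles are skipped.
            bab, pasal, current = m.group(1), None, None
        elif m := PASAL_RE.match(line):
            pasal, current = m.group(1), None
        elif (m := AYAT_RE.match(line)) and pasal is not None:
            meta = {"bab": bab, "pasal": pasal, "ayat": m.group(1)}
            current = Chunk(m.group(2), meta)
            chunks.append(current)
        elif pasal is not None:
            if current is None:
                # Article body without numbered clauses.
                meta = {"bab": bab, "pasal": pasal, "ayat": None}
                current = Chunk(line, meta)
                chunks.append(current)
            else:
                # Continuation of the previous clause or article body.
                current.text = f"{current.text} {line}".strip()
    return chunks
```

Keeping the BAB and Pasal identifiers in each chunk's metadata is what later allows the query phase to cite the source article alongside the retrieved text.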

Query Phase:

- Receive user question from Streamlit UI

- Embed query with same model

- Retrieve top-K docs (threshold: 0.7)

- Format context with BAB/Pasal metadata

- Generate answer with Llama 3.2, stream to UI
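The retrieval and context-formatting steps can be sketched without any vector database. The example below is a minimal illustration, not the project's code: it assumes the 0.7 threshold is a minimum cosine similarity (higher is better), which the text does not confirm, and the names `retrieve`, `format_context`, and the dictionary layout of each document are hypothetical.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def retrieve(query_vec, index, k=3, threshold=0.7):
    """Return up to k (score, doc) pairs with score >= threshold, best first.

    `index` is a list of (vector, doc) pairs. Treating the threshold as a
    similarity floor is an assumption; a distance-based store would invert it.
    """
    scored = [(cosine(query_vec, vec), doc) for vec, doc in index]
    kept = [pair for pair in scored if pair[0] >= threshold]
    kept.sort(key=lambda pair: pair[0], reverse=True)
    return kept[:k]


def format_context(hits):
    """Prefix each retrieved text with its BAB/Pasal metadata."""
    blocks = []
    for _score, doc in hits:
        meta = doc["metadata"]
        blocks.append(f"[BAB {meta['bab']} | Pasal {meta['pasal']}]\n{doc['text']}")
    return "\n\n".join(blocks)
```

The formatted string would then be placed in the prompt sent to the language model. Filtering by threshold before truncating to `k` means a query with no sufficiently similar chunk yields an empty context, which the caller can detect instead of answering from unrelated text.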